Multiple frameworks in, multiple precisions out — on a clean MLIR foundation that's easy to extend.

Multi-framework frontends

PyTorch, ONNX, TFLite and Caffe out of the box. Models from other frameworks can be brought in by exporting them to ONNX first.

HuggingFace LLMs

First-class support for HuggingFace LLMs, including AWQ, GPTQ and AutoRound quantized variants, through a single script, llm_convert.py.

Full precision matrix

F32 / F16 / BF16 / INT8 / W4A16 / W8A16 — including post-training calibration for INT8.
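To make the idea of post-training calibration concrete, here is a minimal sketch in plain Python. It assumes the simplest possible scheme (symmetric, per-tensor, max-abs calibration) and is not the toolchain's actual calibration algorithm. The function names `calibrate_scale`, `quantize` and `dequantize` are hypothetical and exist only for this illustration.

```python
from typing import Iterable, List, Sequence


def calibrate_scale(batches: Iterable[Sequence[float]]) -> float:
    """Derive a symmetric INT8 scale from the largest |value| in the calibration data."""
    max_abs = 0.0
    for batch in batches:
        for value in batch:
            max_abs = max(max_abs, abs(value))
    # Guard against an all-zero calibration set; any positive scale works then.
    return max_abs / 127.0 if max_abs > 0.0 else 1.0


def quantize(values: Sequence[float], scale: float) -> List[int]:
    """Map floats to INT8 codes: divide by the scale, round, clamp to [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dequantize(codes: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float values from INT8 codes."""
    return [c * scale for c in codes]


# Hypothetical activation samples standing in for a real calibration dataset.
calibration_batches = [[0.5, -1.27, 0.02], [1.0, -0.3, 0.75]]
scale = calibrate_scale(calibration_batches)

# 5.0 lies outside the calibrated range, so it saturates at the largest code.
codes = quantize([1.27, -1.27, 0.0, 5.0], scale)
restored = dequantize(codes, scale)
print(scale, codes, restored)
```

The point of the sketch is that the scale is fixed ahead of time from representative inputs, so no retraining is needed. Values outside the calibrated range saturate, which is why the choice of calibration data matters.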

Multi-target codegen

Targets Sophgo TPU processors such as bm1684x and bm1688 from the same MLIR pipeline.

Layer Group optimization

Aggressive graph-level and tensor-level optimizations, including Layer Group and fused pre- and post-processing, keep the TPU busy and memory traffic low.

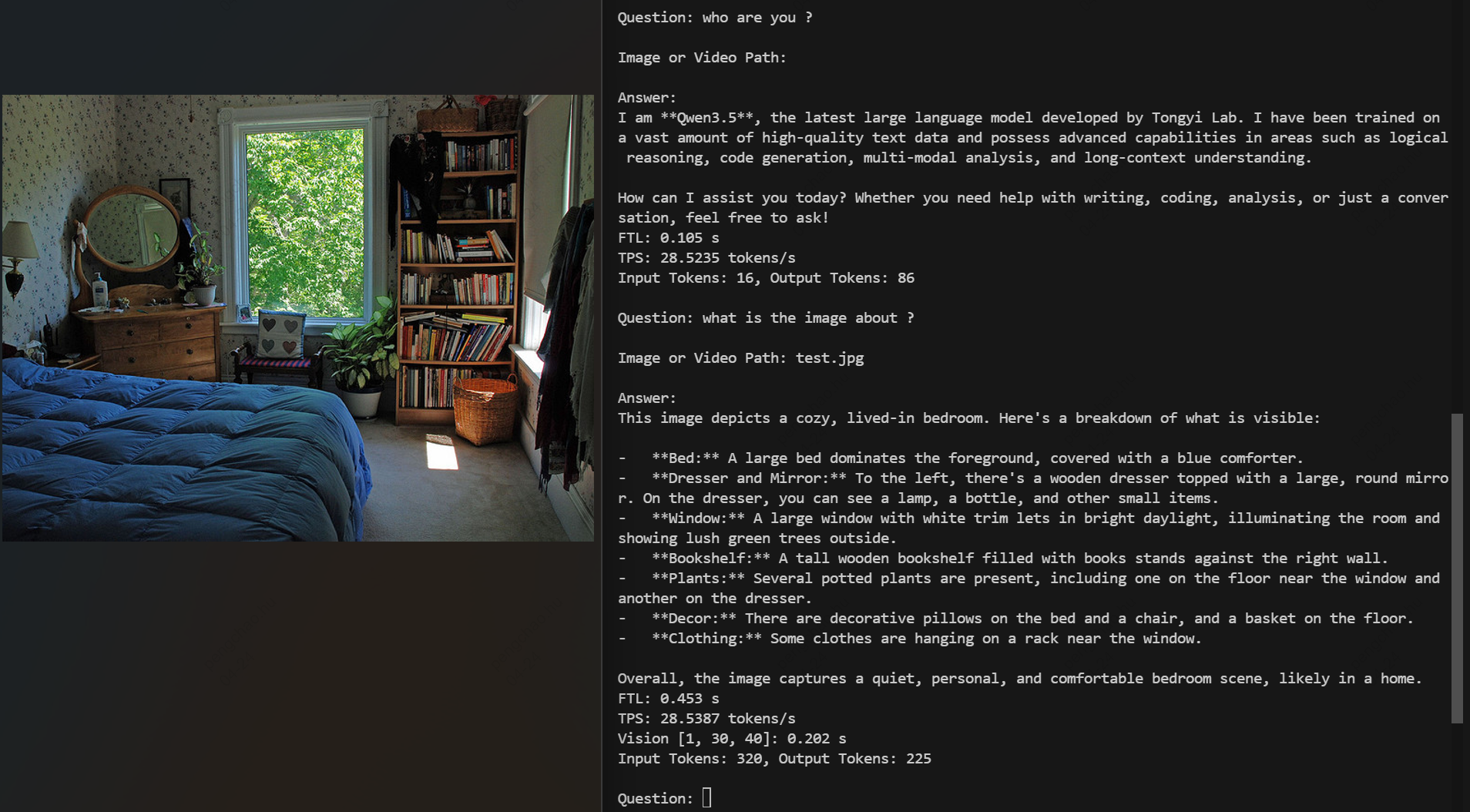

120+ models verified

Vision (YOLO family), speech (Whisper) and large language models (Qwen3.5, Qwen3-VL, MiniCPM-V-4, …) ship in the regression set.